Data collection matrix (Figure 1):

This program was initially prompted by two reports from Egale Canada, one published in 2011 and one in 2021, that used surveys to gather data on the inclusivity, support, and mental health of 2SLGBTQI students in Canadian schools (Peter et al., 2021). The 25–30-minute surveys were administered online and in class and contained 57 items, including six open-ended questions for gathering qualitative data on student perspectives. In total, 3,558 participants provided usable data, and 39% identified as members of the 2SLGBTQI community.

To increase the likelihood of actual use, to improve my credibility as an evaluator with stakeholders (Sanders, 1994), and to ensure that the findings and data analysis are easy for stakeholders to understand and follow, I plan to use similar methods to gather data, report findings and analysis, and make recommendations (BetterEvaluation, 2020).

As determined in earlier steps, the priorities of this program are improving the mental health of 2SLGBTQI students and creating more inclusive schools. As a result, the best way to determine the program's success is to gather survey data from 2SLGBTQI students and from other students in the same school environments, and to collect feedback from program implementers (staff and teachers).

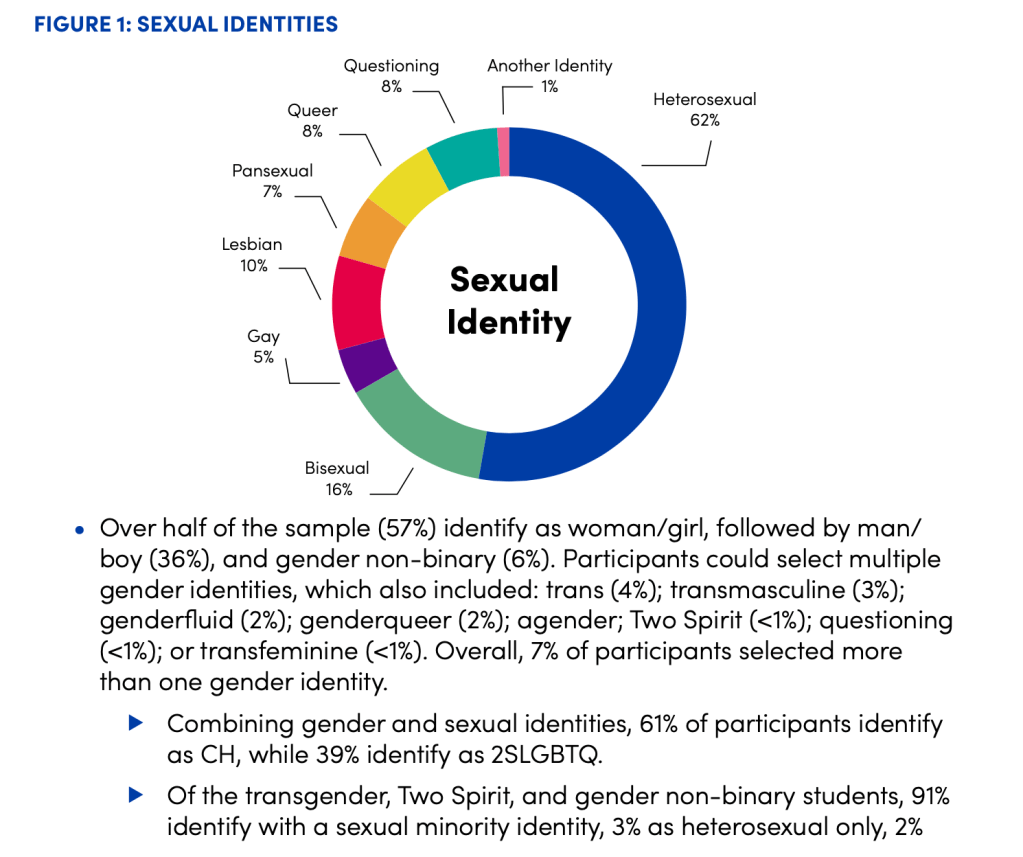

Below are examples of data analyzed and reported by Egale in the 2021 report (Peter et al., 2021). Each set of data is presented in a graph or diagram, and the visual is accompanied by an explanation, further information, and a breakdown of the data.

The surveys conducted according to the data collection matrix (Figure 1) would include these and similar questions, be reported in a similar visual format, and provide regular, updated evidence. Essentially, this evaluation aims to become an established part of the program: one that can help the program implementers improve their evaluative thinking (the conceptual change described by Weiss, 1998) and allow stakeholders to work closely with the evaluator (Patton, 2013), using their own methods in a regular, cyclical process. If the data shows that outcomes are being met, the evaluation helps to legitimize the program (Weiss, 1998); if outcomes are not being met, or if the data shows room for improvement, the program can be adjusted using up-to-date data.

Ideally, this evaluation can be expanded in a “holistic” sense to include formative, process, outcome, and impact evaluations (Chen, 2005). It should become an inherent part of the program design (Patton, 2013) and help the implementers and organization further develop their evaluative thinking (Alkin & Taut, 2003).

By using the organization's own data collection, analysis, and reporting methods, I hope that the organization, rather than producing a report every ten years, will take an interest in continually updating and assessing its program implementation, working with an evaluator to improve data collection and analysis in ways that lead to actual use (Patton, 2013).

Finally, Egale's 2021 report (Peter et al., 2021) emphasized the considerable effect that the data collection and reporting in its 2011 report had on the education community as a whole. This included school systems implementing anti-bullying campaigns, greater inclusivity demonstrated by teachers and students across Canada, and funding and cooperation from other organizations and the Government of Ontario. Ideally, ongoing data collection and reporting will produce similar adjacent benefits and demonstrate the benefit to the general population or adjacent communities, including the program's clients (the students), described by Weiss (1998).

References:

Alkin, M. C., & Taut, S. (2003). Unbundling evaluation use. Studies in Educational Evaluation, 29, 1–12.

Chen, H.-T. (2005). Practical program evaluation: Assessing and improving planning, implementation, and effectiveness. Sage Publications.

Develop reporting media. (2020, July 1). BetterEvaluation. https://www.betterevaluation.org/en/rainbow_framework/report_support_use/develop_reporting_media

Patton, M. Q. (2013). Utilization-focused evaluation for equity-focused and gender-responsive evaluations [Video]. YouTube. https://www.youtube.com/watch?v=jQP1FGhxloY

Peter, T., Campbell, C. P., & Taylor, C. (2021). Still every class in every school: Final report on the second climate survey on homophobia, biphobia, and transphobia in Canadian schools. Egale Canada Human Rights Trust.

Sanders, J. R. (1994). The program evaluation standards (2nd ed.). Joint Committee on Standards for Educational Evaluation. Sage. Retrieved May 14, 2022, from https://www.oecd.org/dev/pgd/38406354.pdf

Weiss, C. H. (1998). Have we learned anything new about the use of evaluation? American Journal of Evaluation, 19, 21–33.